
JavaScript-powered websites are here to stay. As JavaScript in its many frameworks becomes an ever more popular resource for modern websites, SEOs must be able to guarantee their technical implementation is search engine-friendly. In this article, we will focus on how to optimize JS-websites for Google (although Bing also recommends the same solution, dynamic rendering).

The content of this article includes:

1. JavaScript challenges for SEO
2. Client-side and server-side rendering
3. How Google crawls websites
4. How to detect client-side rendered content
5. The solutions: Hybrid rendering and dynamic rendering

1. JavaScript challenges for SEO

React, Vue, Angular, Node, and Polymer. If at least one of these fancy names rings a bell, then most likely you are already dealing with a JavaScript-powered website. All these JavaScript frameworks provide great flexibility and power to modern websites. They open a large range of possibilities in terms of client-side rendering (that is, allowing the page to be rendered by the browser instead of the server), page load capabilities, dynamic content, user interaction, and extended functionalities. If we only look at what has an impact on SEO, JavaScript frameworks can do the following for a website:

Unfortunately, if implemented without using a pair of SEO lenses, JavaScript frameworks can pose serious challenges to the page performance, ranging from speed deficiencies to render-blocking issues, or even hindering crawlability of content and links. There are many aspects that SEOs must look after when auditing a JavaScript-powered web page, which can be summarized as follows:

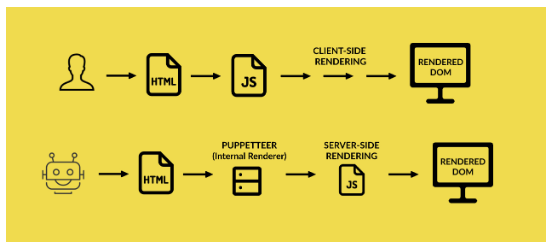

A lot of questions to answer. So where should an SEO start? Below are key guidelines for optimizing JS-websites, so that these frameworks can be used while keeping the search engine bots happy.

2. Client-side and server-side rendering: The best “frenemies”

Probably the most important piece of knowledge all SEOs need when they have to cope with JS-powered websites is the concept of client-side and server-side rendering. Understanding the differences, benefits, and disadvantages of both is critical to deploying the right SEO strategy and not getting lost when speaking with software engineers (who eventually are the ones in charge of implementing that strategy). Let’s look at how Googlebot crawls and indexes pages, putting it as a very basic sequential process:

1. The client (web browser) places several requests to the server, in order to download all the necessary information that will eventually display the page. Usually, the very first request concerns the static HTML document.
2. The CSS and JS files, referred to by the HTML document, are then downloaded: these are the styles, scripts and services that contribute to generating the page.
3. The Web Rendering Service (WRS) parses and executes the JavaScript (which can manage all or part of the content or just a simple functionality).

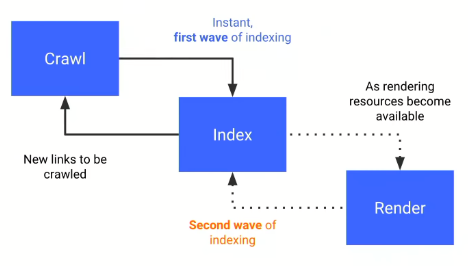

4. Caffeine (Google’s indexer) indexes the content found.
5. New links are discovered within the content for further crawling.

This is the theory, but in the real world, Google doesn’t have infinite resources and has to do some prioritization in the crawling.

3. How Google actually crawls websites

Google is a very smart search engine with very smart crawlers. However, it usually adopts a reactive approach when it comes to new technologies applied to web development. This means that it is Google and its bots that need to adapt to the new frameworks as they become more and more popular (which is the case with JavaScript). For this reason, the way Google crawls JS-powered websites is still far from perfect, with blind spots that SEOs and software engineers need to mitigate somehow. This is in a nutshell how Google actually crawls these sites:

The above graph was shared by Tom Greenaway at the Google I/O 2018 conference, and what it basically says is: if you have a site that relies heavily on JavaScript, you’d better load the JS content very quickly, otherwise we will not be able to render it (and hence index it) during the first wave, and it will be postponed to a second wave, and no one knows when that may occur. Therefore, your client-side rendered content based on JavaScript will probably be rendered by the bots in the second wave, because during the first wave they will load your server-side content, which should be fast enough. But they don’t want to spend too many resources and take on too many tasks. In Tom Greenaway’s words:

Implications for SEO are huge: your content may not be discovered until one, two or even five weeks later, and in the meantime, only your content-less page would be assessed and ranked by the algorithm. What an SEO should be most worried about at this point is this simple equation:

No content is found = Content is (probably) hardly indexable

And how would a content-less page rank? Easy to guess for any SEO.

So far so good. The next step is learning if the content is rendered client-side or server-side (without asking software engineers).

4. How to detect client-side rendered content

Option one: The Document Object Model (DOM)

There are several ways to find out, and for this, we need to introduce the concept of the DOM. The Document Object Model defines the structure of an HTML (or an XML) document, and how such documents can be accessed and manipulated. In SEO and software engineering we usually refer to the DOM as the final HTML document rendered by the browser, as opposed to the original static HTML document that lives on the server. You can think of the HTML as the trunk of a tree. You can add branches, leaves, flowers, and fruits to it (that is the DOM). What JavaScript does is manipulate the HTML and create an enriched DOM that adds functionality and content. In practice, you can check the static HTML by pressing “Ctrl+U” on any page you are looking at, and the DOM by “Inspecting” the page once it’s fully loaded. Most of the time, for modern websites, you will see that the two documents are quite different.

Option two: JS-free Chrome profile

Create a new profile in Chrome and disallow JavaScript through the content settings (access them directly here – chrome://settings/content). Any URL you browse with this profile will not load any JS content. Therefore, any blank spot in your page identifies a piece of content that is served client-side.
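The comparison behind option one (static HTML versus the rendered DOM) can also be roughed out in code. The following is only a minimal sketch, assuming you have already saved both documents as strings yourself (for example the page source from “Ctrl+U” and the outer HTML copied from the inspector). The helper names `visible_text` and `client_side_only` and the sample markup are made up for illustration, and the text matching is deliberately naive.

```python
from html.parser import HTMLParser


class _TextCollector(HTMLParser):
    """Collects visible text chunks, skipping <script> and <style> bodies."""

    _SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self._SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self._SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # collapse runs of whitespace
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def visible_text(html):
    """Return the text chunks a reader would see in the given HTML string."""
    parser = _TextCollector()
    parser.feed(html)
    parser.close()
    return parser.chunks


def client_side_only(static_html, rendered_html):
    """Return text present in the rendered DOM but missing from the static HTML."""
    static_chunks = set(visible_text(static_html))
    return [c for c in visible_text(rendered_html) if c not in static_chunks]


# Hypothetical sample documents: the product name only exists after JS runs.
static_doc = '<body><h1>Shop</h1><div id="app"></div><script>render()</script></body>'
rendered_doc = '<body><h1>Shop</h1><div id="app"><p>Blue mug</p></div><script>render()</script></body>'
print(client_side_only(static_doc, rendered_doc))  # ['Blue mug']
```

Anything this prints is content that a crawler reading only the static HTML would not see, which is the same signal as the blank spots in a JS-free Chrome profile.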
Option three: Fetch as Google in Google Search Console

Provided that your website is registered in Google Search Console (I can’t think of any good reason why it wouldn’t be), use the “Fetch as Google” tool in the old version of the console. This will return a rendering of how Googlebot sees the page and a rendering of how a normal user sees it. Many differences there?

Option four: Run Chrome version 41 in headless mode (Chromium)

Google officially stated in early 2018 that they use an older version of Chrome (specifically version 41, which anyone can download from here) in headless mode to render websites. The main implication is that a page that doesn’t render well in that version of Chrome may run into crawling problems.

Option five: Crawl the page on Screaming Frog using Googlebot

Do this with the JavaScript rendering option disabled. Check if the content and meta-content are rendered correctly by the bot.

After all these checks, still ask your software engineers, because you don’t want to leave any loose ends.

5. The solutions: Hybrid rendering and dynamic rendering

Asking a software engineer to roll back a piece of great development work because it hurts SEO can be a difficult task. It happens frequently that SEOs are not involved in the development process, and they are called in only when the whole infrastructure is in place. We SEOs should all work on improving our relationship with software engineers and make them aware of the huge implications that any innovation can have on SEO. This is how a problem like content-less pages can be avoided from the get-go. The solution rests on two approaches.

Hybrid rendering

Also known as Isomorphic JavaScript, this approach aims to minimize the need for client-side rendering, and it doesn’t differentiate between bots and real users. Hybrid rendering suggests the following:

Dynamic rendering

This approach aims to detect requests placed by a bot vs the ones placed by a user and serves the page accordingly.
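The bot-versus-user decision can be sketched in a few lines. This is an illustrative sketch only, not a production setup: the token list, the function names `is_search_bot` and `choose_variant`, and the variant labels are all assumptions made for the example, and a User-Agent header can be spoofed, so real deployments usually add further verification.

```python
# Hypothetical list of crawler tokens; extend it for the bots you care about.
BOT_TOKENS = ("googlebot", "bingbot")


def is_search_bot(user_agent):
    """Return True when the User-Agent header contains a known crawler token."""
    ua = (user_agent or "").lower()
    return any(token in ua for token in BOT_TOKENS)


def choose_variant(user_agent):
    """Pick which version of the page to serve for this request."""
    # Bots get HTML that was rendered ahead of time on the server side;
    # everyone else gets the normal client-side rendered application.
    return "prerendered" if is_search_bot(user_agent) else "client-side"
```

For example, `choose_variant("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)")` returns `"prerendered"`, while a regular browser user agent returns `"client-side"`. Both variants should carry the same content, so that bots and users see the same page.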

The best of both worlds

Combining the two solutions can also provide great benefit to both users and bots.

Conclusion

As the use of JavaScript in modern websites is growing every day, through many light and easy frameworks, requiring software engineers to rely solely on HTML to please search engine bots is neither realistic nor feasible. However, the SEO issues raised by client-side rendering solutions can be successfully tackled in different ways using hybrid rendering and dynamic rendering. Knowing the technology available, your website infrastructure, your engineers, and the solutions can guarantee the success of your SEO strategy even in complicated environments such as JavaScript-powered websites.

Giorgio Franco is a Senior Technical SEO Specialist at Vistaprint.

The post A survival kit for SEO-friendly JavaScript websites appeared first on Search Engine Watch. source https://searchenginewatch.com/2019/04/09/a-survival-kit-for-seo-friendly-javascript-websites/ from http://risingphoenixseo.blogspot.com/2019/04/a-survival-kit-for-seo-friendly.html


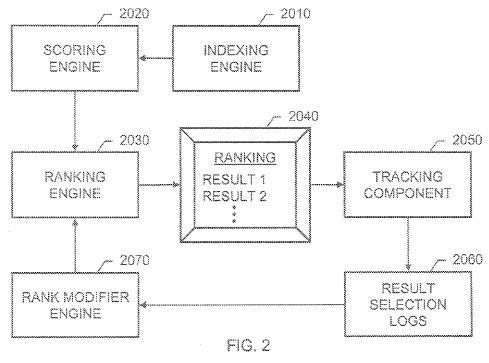

When SEO Was Easy

When I got started on the web over 15 years ago I created an overly broad & shallow website that had little chance of making money because it was utterly undifferentiated and crappy. In spite of my best (worst?) efforts while being a complete newbie, sometimes I would go to the mailbox and see a check for a couple hundred or a couple thousand dollars come in. My old roommate & I went to Coachella & when the trip was over I returned to a bunch of mail to catch up on & realized I had made way more while not working than what I spent on that trip.

What was the secret to a total newbie making decent income by accident? Horrible spelling. Back then search engines were not as sophisticated with their spelling correction features & I was one of 3 or 4 people in the search index that misspelled the name of an online casino the same way many searchers did. The high minded excuse for why I did not scale that would be claiming I knew it was a temporary trick that was somehow beneath me. The more accurate reason would be thinking in part it was a lucky fluke rather than thinking in systems. If I were clever at the time I would have created the misspeller's guide to online gambling, though I think I was just so excited to make anything from the web that I perhaps lacked the ambition & foresight to scale things back then.

In the decade that followed I had a number of other lucky breaks like that. One time one of the original internet bubble companies that managed to stay around put up a sitewide footer link targeting the concept that one of my sites made decent money from. This was just before the great recession, before Panda existed. The concept they targeted had 3 or 4 ways to describe it. 2 of them were very profitable & if they targeted either of the most profitable versions with that page the targeting would have sort of carried over to both.
They would have outranked me if they targeted the correct version, but they didn't so their mistargeting was a huge win for me.

Search Gets Complex

Search today is much more complex. In the years since those easy-n-cheesy wins, Google has rolled out many updates which aim to feature sought after destination sites while diminishing the sites which rely on "one simple trick" to rank. Arguably the quality of the search results has improved significantly as search has become more powerful, more feature rich & has layered in more relevancy signals. Many quality small web publishers have gone away due to some combination of increased competition, algorithmic shifts & uncertainty, and reduced monetization as more ad spend was redirected toward Google & Facebook. But the impact as felt by any given publisher is not the impact as felt by the ecosystem as a whole. Many terrible websites have also gone away, while some formerly obscure though higher-quality sites rose to prominence.

There was the Vince update in 2009, which boosted the rankings of many branded websites. Then in 2011 there was Panda as an extension of Vince, which tanked the rankings of many sites that published hundreds of thousands or millions of thin content pages while boosting the rankings of trusted branded destinations. Then there was Penguin, which was a penalty that hit many websites which had heavily manipulated or otherwise aggressive appearing link profiles. Google felt there was a lot of noise in the link graph, which was their justification for Penguin. There were updates which lowered the rankings of many exact match domains. And then increased ad load in the search results along with the other above ranking shifts further lowered the ability to rank keyword-driven domain names. If your domain is generically descriptive then there is a limit to how differentiated & memorable you can make it if you are targeting the core market the keywords are aligned with.
There is a reason eBay is more popular than auction.com, Google is more popular than search.com, Yahoo is more popular than portal.com & Amazon is more popular than a store.com or a shop.com. When the winner-take-most nature of many online markets is coupled with the move away from using classic relevancy signals, the economics shift to where it makes a lot more sense to carry the heavy overhead of establishing a strong brand. Branded and navigational search queries could be used in the relevancy algorithm stack to confirm the quality of a site & verify (or dispute) the veracity of other signals. Historically relevant algo shortcuts become less appealing as they become less relevant to the current ecosystem & even less aligned with the future trends of the market. Add in negative incentives for pushing on a string (penalties on top of wasting the capital outlay) and a more holistic approach certainly makes sense.

Modeling Web Users & Modeling Language

PageRank was an attempt to model the random surfer. When Google is pervasively monitoring most users across the web they can shift to directly measuring their behaviors instead of using indirect signals. Years ago Bill Slawski wrote about the long click, opening by quoting Steven Levy's In the Plex: How Google Thinks, Works, and Shapes Our Lives

Of course, there's a patent for that. In Modifying search result ranking based on implicit user feedback they state:

If you are a known brand you are more likely to get clicked on than a random unknown entity in the same market. And if you are something people are specifically seeking out, they are likely to stay on your website for an extended period of time.

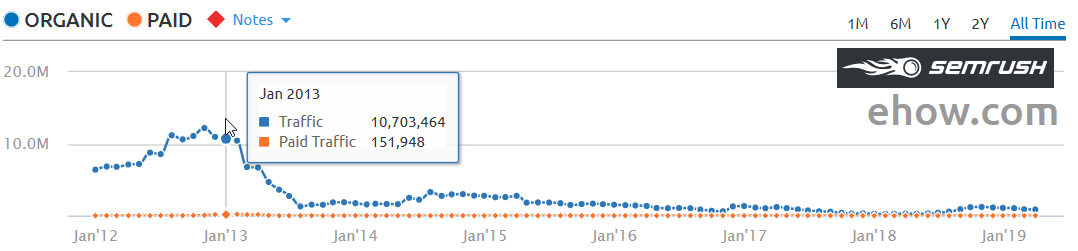

Attempts to manipulate such data may not work.

And just like Google can make a matrix of documents & queries, they could also choose to put more weight on search accounts associated with topical expert users based on their historical click patterns.

Google was using click data to drive their search rankings as far back as 2009. David Naylor was perhaps the first person who publicly spotted this. Google was ranking Australian websites for [tennis court hire] in the UK & Ireland, in part because that is where most of the click signal came from. That phrase was most widely searched for in Australia. In the years since, Google has done a better job of geographically isolating clicks to prevent things like the problem David Naylor noticed, where almost all search results in one geographic region came from a different country.

Whenever SEOs mention using click data to search engineers, the search engineers quickly respond that they might consider any signal, but clicks would be a noisy signal. But if a signal has noise, an engineer would work around the noise by finding ways to filter the noise out or combine multiple signals. To this day Google states they are still working to filter noise from the link graph: "We continued to protect the value of authoritative and relevant links as an important ranking signal for Search." A site with millions of inbound links and few intentional visits, where those who do visit quickly click the back button (due to a heavy ad load, poor user experience, low quality content, shallow content, outdated content, or some other bait-n-switch approach)...that's an outlier. Preventing those sorts of sites from ranking well would be another way of protecting the value of authoritative & relevant links.

Best Practices Vary Across Time & By Market + Category

Along the way, concurrent with the above sorts of updates, Google also improved their spelling auto-correct features, auto-completed search queries for many years through a feature called Google Instant (though they later undid forced query auto-completion while retaining automated search suggestions), and then they rolled out a few other algorithms that further allowed them to model language & user behavior.
Today it would be much harder to get paid above median wages explicitly for sucking at basic spelling or scaling some other individual shortcut to the moon, like pouring millions of low quality articles into a (formerly!) trusted domain. Nearly a decade after Panda, eHow's rankings still haven't recovered. Back when I got started with SEO the phrase Indian SEO company was associated with cut-rate work where people were buying exclusively based on price. Sort of like a "I got a $500 budget for link building, but can not under any circumstance invest more than $5 in any individual link." Part of how my wife met me was she hired a hack SEO from San Diego who outsourced all the work to India and marked the price up about 100-fold while claiming it was all done in the United States. He created reciprocal links pages that got her site penalized & it didn't rank until after she took her reciprocal links page down. With that sort of behavior widespread (hack US firm teaching people working in an emerging market poor practices), it likely meant many SEO "best practices" which were learned in an emerging market (particularly where the web was also underdeveloped) would be more inclined to being spammy. Considering how far ahead many Western markets were on the early Internet & how India has so many languages & how most web usage in India is based on mobile devices where it is hard for users to create links, it only makes sense that Google would want to place more weight on end user data in such a market. If you set your computer location to India Bing's search box lists 9 different languages to choose from. The above is not to state anything derogatory about any emerging market, but rather that various signals are stronger in some markets than others. And competition is stronger in some markets than others. Search engines can only rank what exists.

Impacting the Economics of Publishing

Now search engines can certainly influence the economics of various types of media. At one point some otherwise credible media outlets were pitching the Demand Media IPO narrative that Demand Media was the publisher of the future & what other media outlets will look like. Years later, after heavily squeezing on the partner network & promoting programmatic advertising that reduces CPMs by the day, Google is funding partnerships with multiple news publishers like McClatchy & Gatehouse to try to revive the news dead zones even Facebook is struggling with.

As mainstream newspapers continue laying off journalists, Facebook's news efforts are likely to continue failing unless they include direct economic incentives, as Google's programmatic ad push broke the banner ad:

Google is offering news publishers audience development & business development tools.

Heavy Investment in Emerging Markets Quickly Evolves the Markets

As the web grows rapidly in India, they'll have a thousand flowers bloom. In 5 years the competition in India & other emerging markets will be much tougher as those markets continue to grow rapidly. Media is much cheaper to produce in India than it is in the United States. Labor costs are lower & they never had the economic albatross that is the ACA adversely impact their economy. At some point the level of investment & increased competition will mean early techniques stop having as much efficacy. Chinese companies are aggressively investing in India.

RankBrain

RankBrain appears to be based on using user clickpaths on head keywords to help bleed rankings across into related searches which are searched less frequently. A Googler didn't state this specifically, but it is how they would be able to use models of searcher behavior to refine search results for keywords which are rarely searched for. In a recent interview in Scientific American a Google engineer stated: "By design, search engines have learned to associate short queries with the targets of those searches by tracking pages that are visited as a result of the query, making the results returned both faster and more accurate than they otherwise would have been."

Now a person might go out and try to search for something a bunch of times or pay other people to search for a topic and click a specific listing, but some of the related Google patents on using click data (which keep getting updated) mentioned how they can discount or turn off the signal if there is an unnatural spike of traffic on a specific keyword, or if there is an unnatural spike of traffic heading to a particular website or web page. And, since Google is tracking the behavior of end users on their own website, anomalous behavior is easier to track than it is tracking something across the broader web where signals are more indirect. Google can take advantage of their wide distribution of Chrome & Android where users are regularly logged into Google & pervasively tracked to place more weight on users where they had credit card data, a long account history with regular normal search behavior, heavy Gmail users, etc.

Plus there is a huge gap between the cost of traffic & the ability to monetize it. You might have to pay someone a dime or a quarter to search for something & there is no guarantee it will work on a sustainable basis even if you paid hundreds or thousands of people to do it.
Any of those experimental searches will have no lasting value unless they influence rank, but even if they do influence rankings it might only last temporarily. If you bought a bunch of traffic into something genuine Google searchers didn't like then even if it started to rank better temporarily the rankings would quickly fall back if the real end user searchers disliked the site relative to other sites which already rank.

This is part of the reason why so many SEO blogs mention brand, brand, brand. If people are specifically looking for you in volume & Google can see that thousands or millions of people specifically want to access your site then that can impact how you rank elsewhere. Even looking at something inside the search results for a while (dwell time) or quickly skipping over it to have a deeper scroll depth can be a ranking signal. Some Google patents mention how they can use mouse pointer location on desktop or scroll data from the viewport on mobile devices as a quality signal.

Neural Matching

Last year Danny Sullivan mentioned how Google rolled out neural matching to better understand the intent behind a search query.

The above Tweets capture what the neural matching technology intends to do. Google also stated:

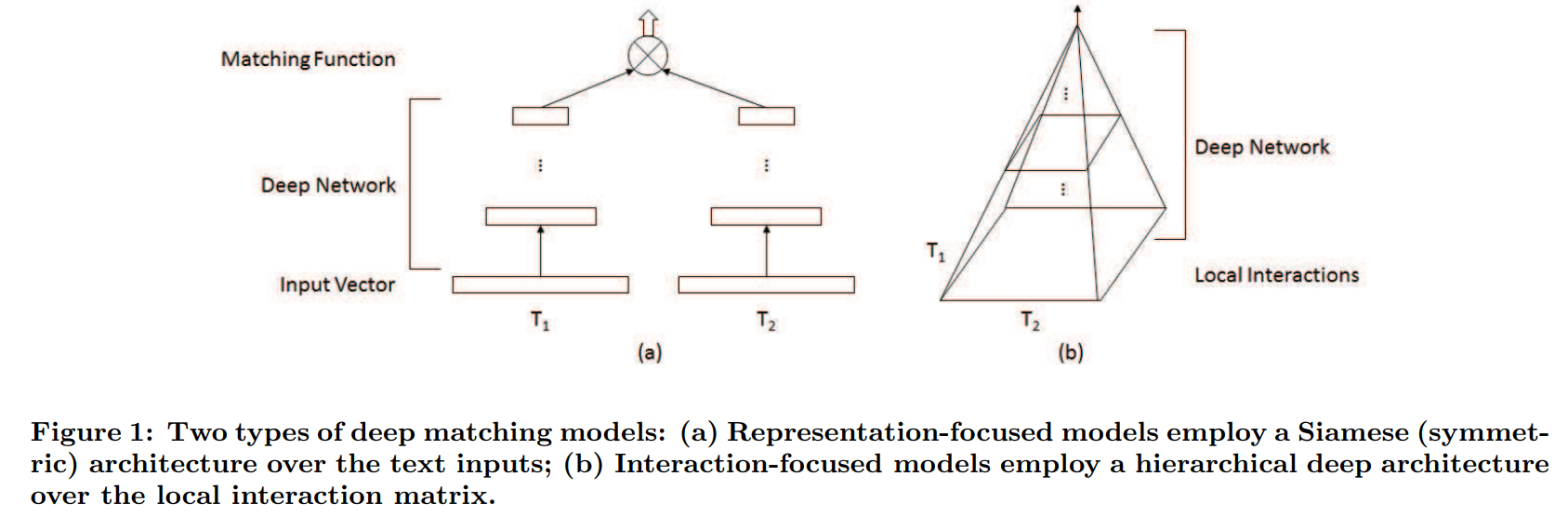

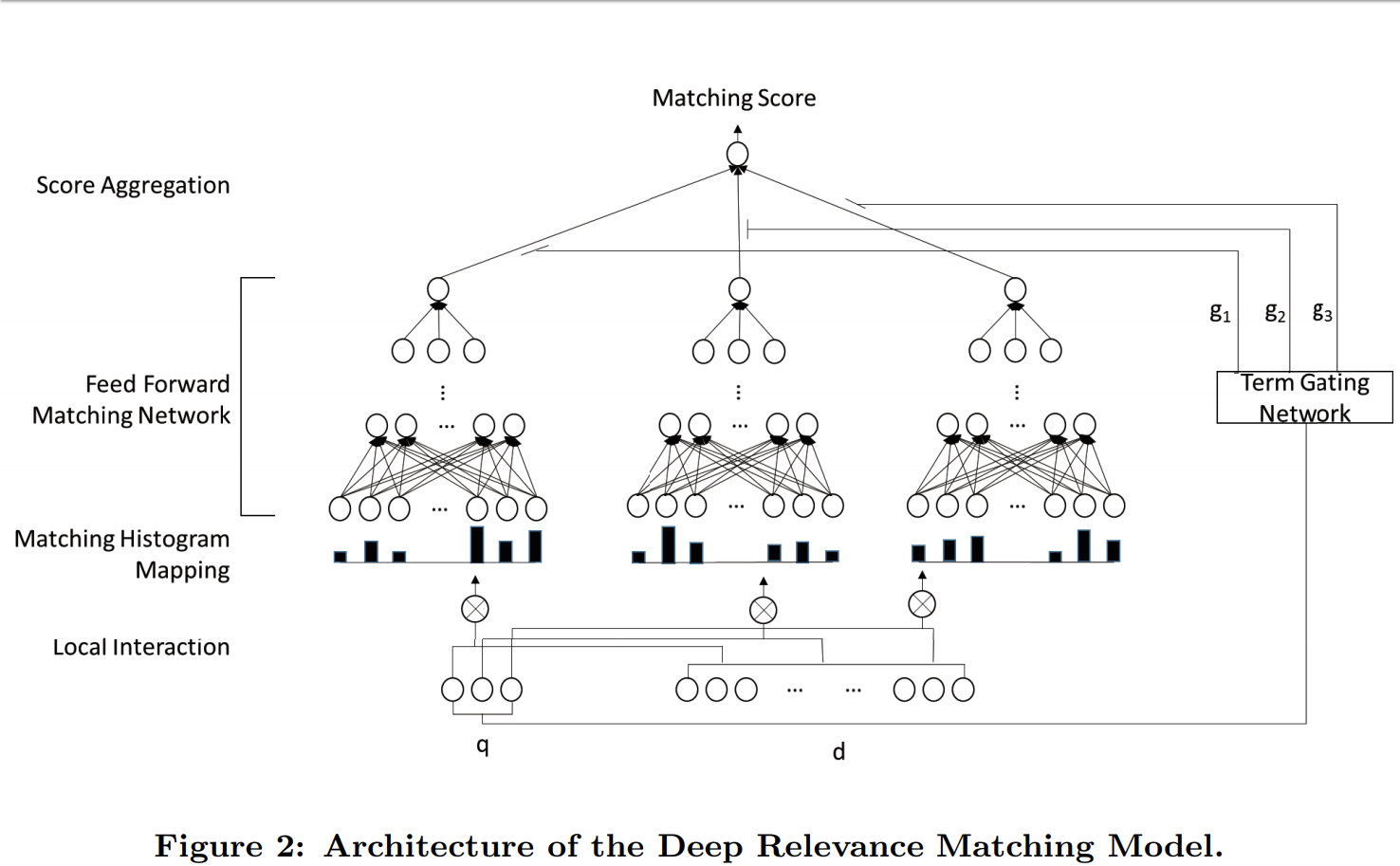

To help people understand the difference between neural matching & RankBrain, Google told SEL: "RankBrain helps Google better relate pages to concepts. Neural matching helps Google better relate words to searches." There are a couple research papers on neural matching. The first one was titled A Deep Relevance Matching Model for Ad-hoc Retrieval. It mentioned using Word2vec & here are a few quotes from the research paper

The paper mentions how semantic matching falls down when compared against relevancy matching because:

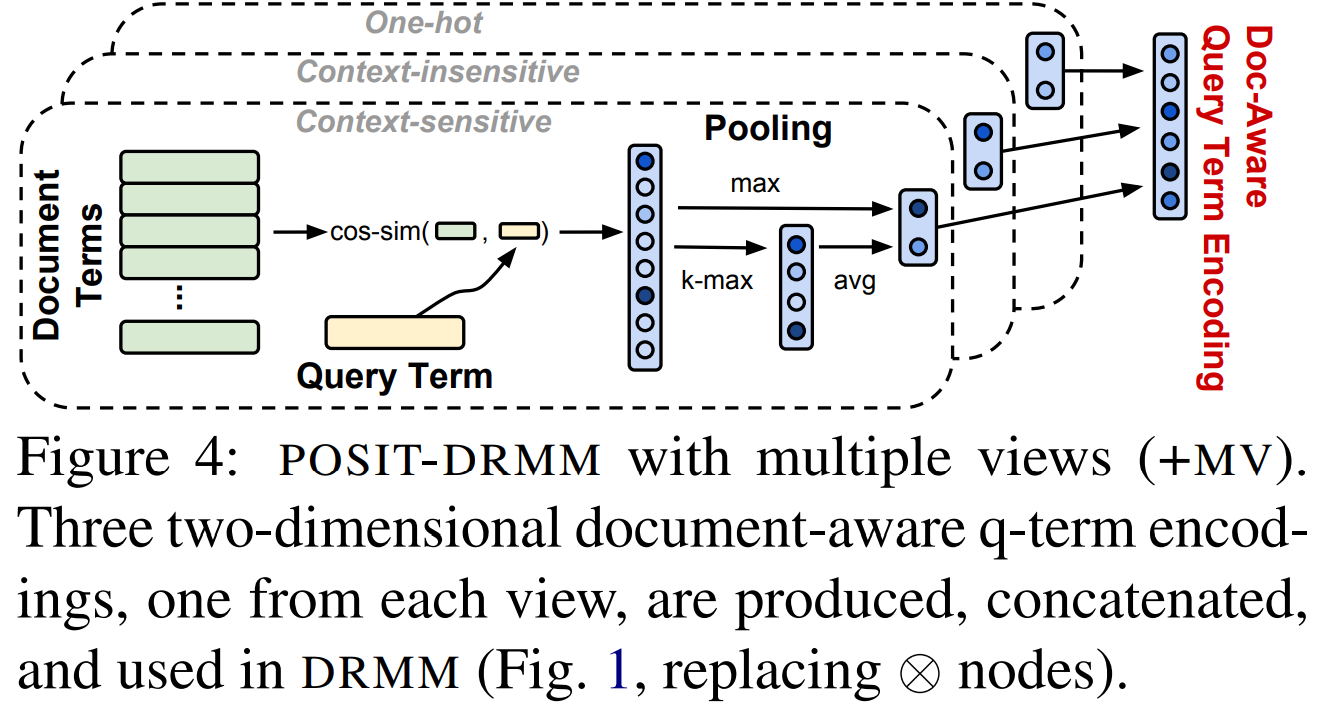

Here are a couple images from the above research paper

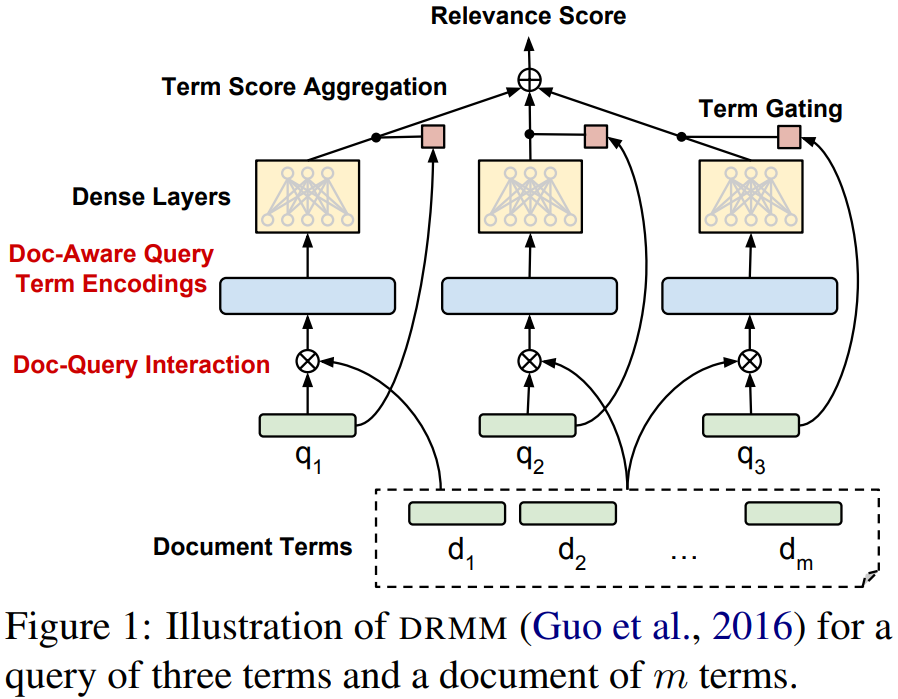

And then the second research paper is Deep Relevance Ranking Using Enhanced Document-Query Interactions. That same sort of re-ranking concept is being better understood across the industry. There are ranking signals that earn some base level ranking, and then results get re-ranked based on other factors like how well a result matches the user intent. Here are a couple images from the above research paper.

For those who hate the idea of reading research papers or patent applications, Martinibuster also wrote about the technology here. About the only part of his post I would debate is this one:

I think one should always consider user experience over other factors, however a person could still use variations throughout the copy & pick up a bit more traffic without coming across as spammy. Danny Sullivan mentioned the super synonym concept was impacting 30% of search queries, so there are still a lot which may only be available to those who use a specific phrase on their page. Martinibuster also wrote another blog post tying more research papers & patents to the above. You could probably spend a month reading all the related patents & research papers.

The above sort of language modeling & end user click feedback complement links-based ranking signals in a way that makes it much harder to luck one's way into any form of success by being a terrible speller or just bombing away at link manipulation without much concern toward any other aspect of the user experience or market you operate in.

Pre-penalized Shortcuts

Google was even issued a patent for predicting site quality based upon the N-grams used on the site & comparing those against the N-grams used on other established sites where quality has already been scored via other methods: "The phrase model can be used to predict a site quality score for a new site; in particular, this can be done in the absence of other information. The goal is to predict a score that is comparable to the baseline site quality scores of the previously-scored sites." Have you considered using a PLR package to generate the shell of your site's content? Good luck with that as some sites trying that shortcut might be pre-penalized from birth.

Navigating the Maze

When I started in SEO one of my friends had a dad who is vastly smarter than I am. He advised me that Google engineers were smarter, had more capital, had more exposure, had more data, etc etc etc ... and thus SEO was ultimately going to be a malinvestment. Back then he was at least partially wrong because influencing search was so easy.
But in the current market, 16 years later, we are near the inflection point where he would finally be right. At some point the shortcuts stop working & it makes sense to try a different approach. The flip side of all the above changes is as the algorithms have become more complex they have gone from being a headwind to people ignorant about SEO to being a tailwind to those who do not focus excessively on SEO in isolation.

If one is a dominant voice in a particular market, if they break industry news, if they have key exclusives, if they spot & name the industry trends, if their site becomes a must read & is what amounts to a habit ... then they perhaps become viewed as an entity. Entity-related signals help them & those signals that are working against the people who might have lucked into a bit of success become a tailwind rather than a headwind. If your work defines your industry, then any efforts to model entities, user behavior or the language of your industry are going to boost your work on a relative basis.

This requires sites to publish frequently enough to be a habit, or publish highly differentiated content which is strong enough that it is worth the wait. Those which publish frequently without being particularly differentiated are almost guaranteed to eventually walk into a penalty of some sort. And each additional person who reads marginal, undifferentiated content (particularly if it has an ad-heavy layout) is one additional visitor that site is closer to eventually getting whacked. Success becomes self regulating. Any short-term success becomes self defeating if one has a highly opportunistic short-term focus. Those who write content that only they could write are more likely to have sustained success.

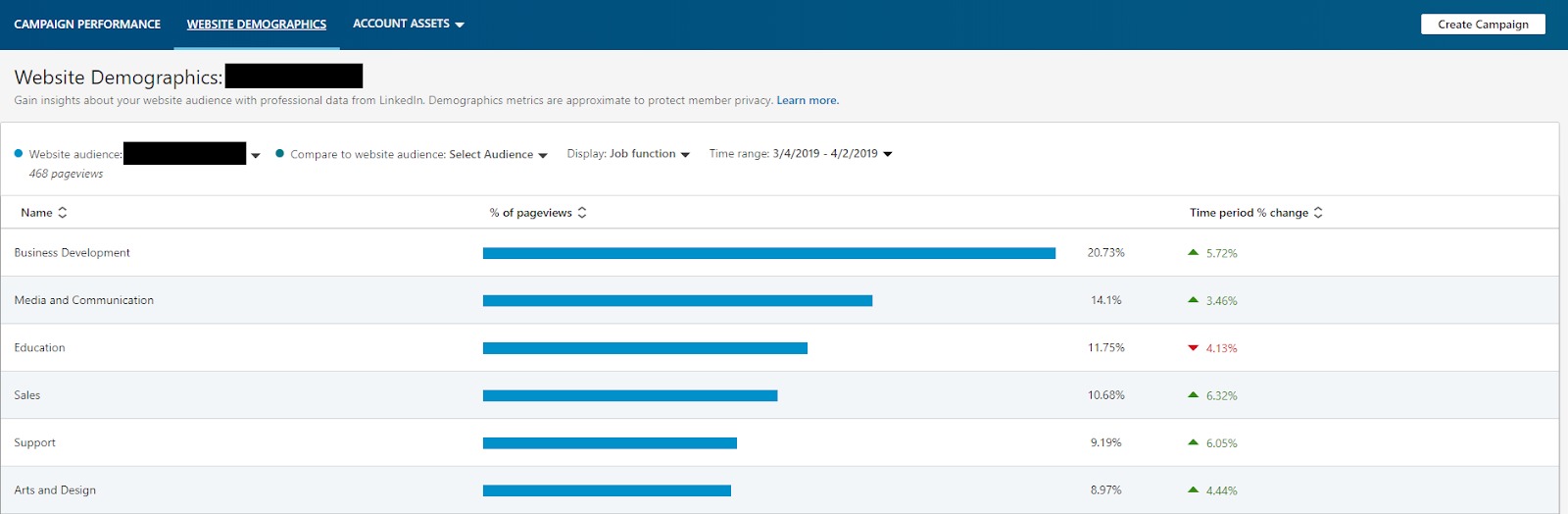

source http://www.seobook.com/keyword-not-provided-it-just-clicks from http://risingphoenixseo.blogspot.com/2019/04/keyword-not-provided-but-it-just-clicks.html

When starting out a digital marketing program, you might not yet have a lot of internal data that helps you understand your target consumer. You might also have smaller budgets that do not allow for a large amount of audience research. So do you start throwing darts with your marketing? No way. It is critical to understand your target consumer to expand your audiences and segment them intelligently to engage them with effective messaging and creatives. Even at a limited budget, you have a few tools that can help you understand your target audience and the audience that you want to reach. We will walk through a few of these tools in further detail below.

Five tools for audience research on a budget

Tool #1 – In-platform insights (LinkedIn)

If you already have a LinkedIn Ads account, you have a great place to gain insights on your target consumer, especially if you are a B2B lead generation business. In order to pull data on your target market, you must place the LinkedIn insight tag on your site. Once the tag has been placed, you will be able to start pulling audience data, which can be found on the website demographics tab. The insights provided include location, country, job function, job title, company, company industry, job seniority, and company size. You can look at the website as a whole or view specific pages on the site by creating website audiences. You can also compare the different audiences that you have created.

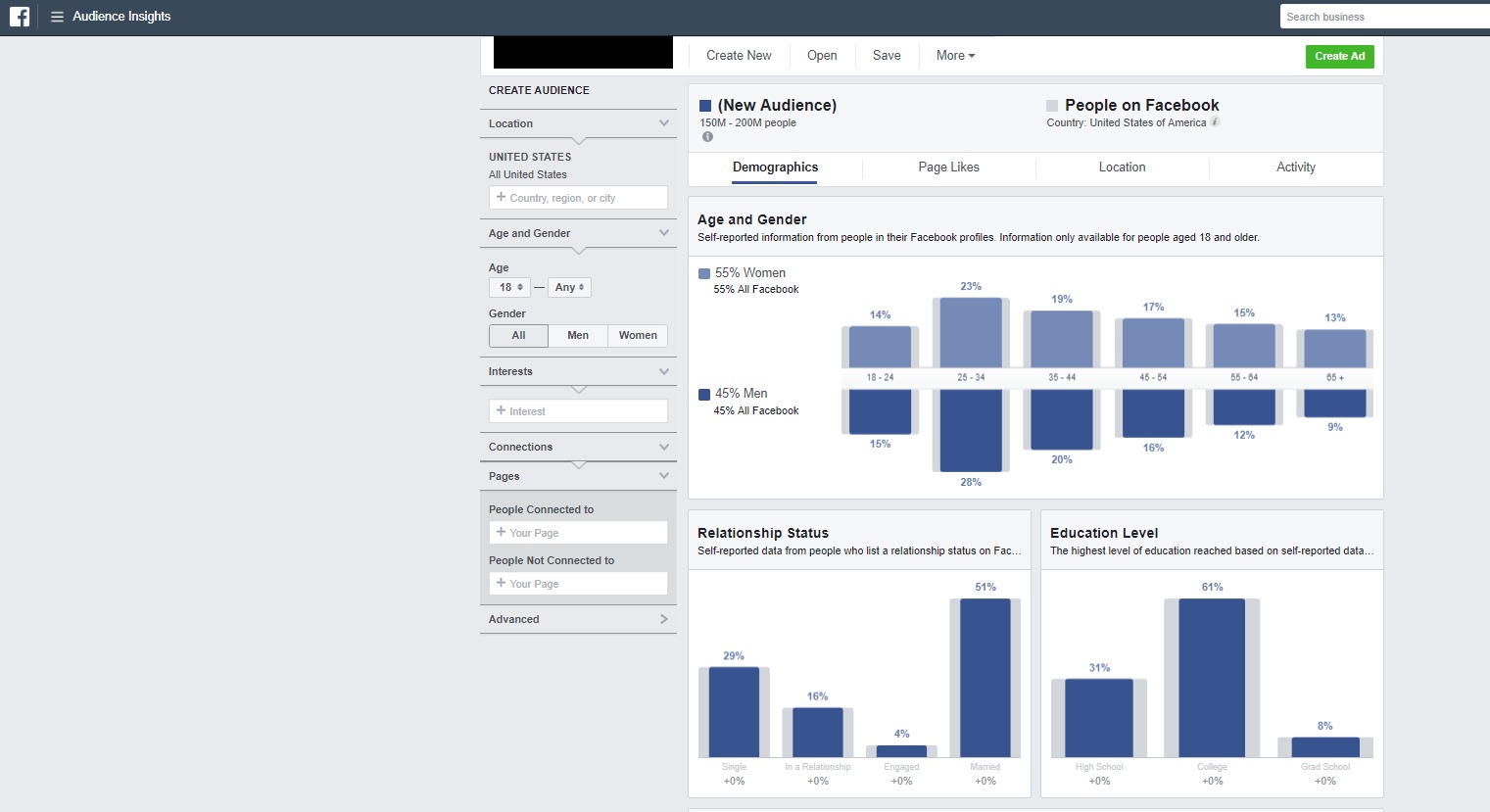

Tool #2 – In-platform insights (Facebook)

Facebook’s Audience Insights tool allows you to gain more information about the audience interacting with your page. It also shows you the people interested in your competitors’ pages. You can see a range of information about people currently interacting with your page by selecting “People connected to your page.” To find out information about the users interacting with competitor pages, select “Interests” and type the competitor page or pages. The information that you can view includes age and gender, relationship status, education level, job title, page likes, location (cities, countries, and languages), and device used.

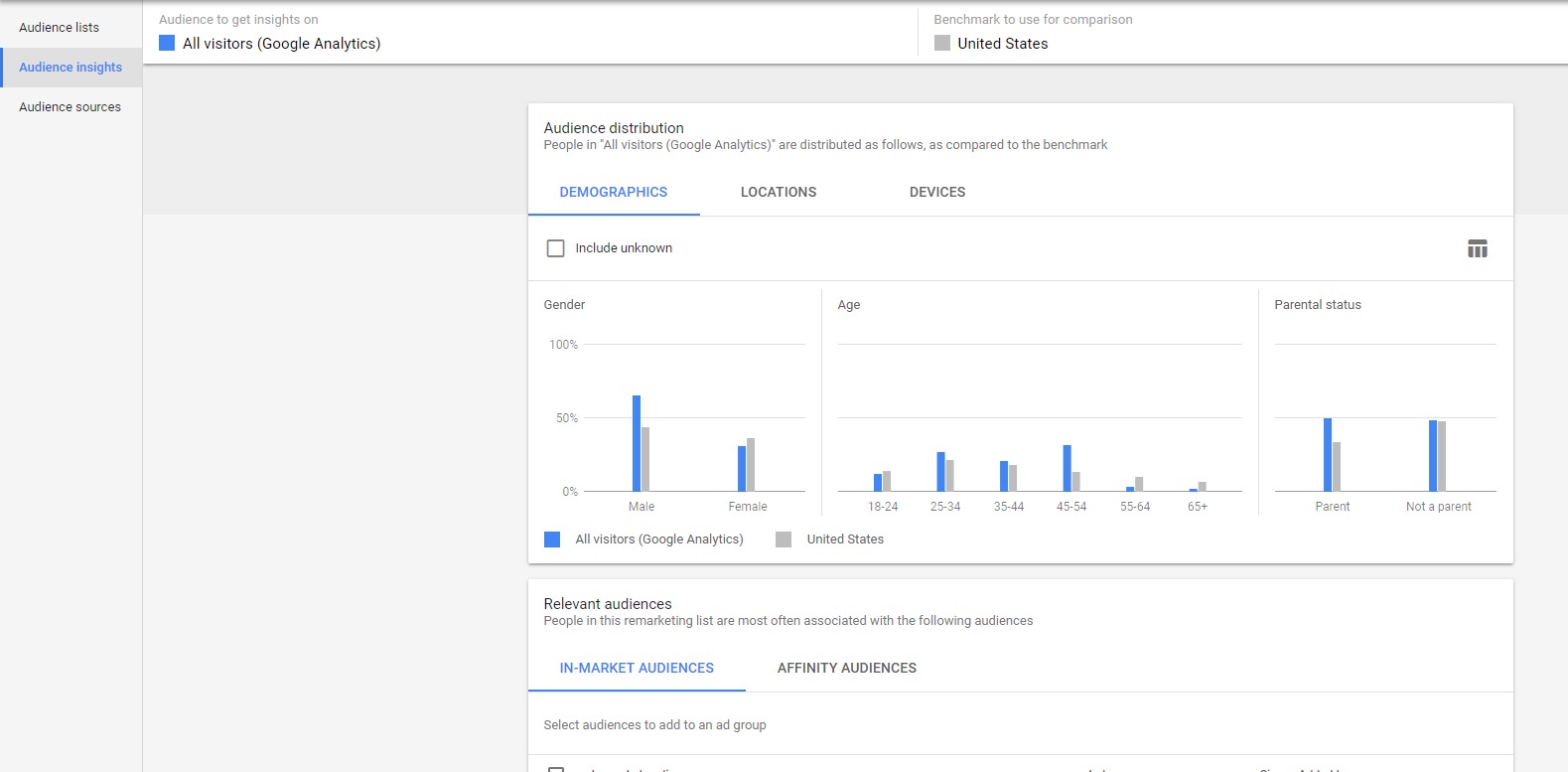

Tool #3 – In-platform insights (Google Customer Match)

Google Customer Match is a great way to get insights on your customers if you have not yet run paid search or social campaigns. You can load in a customer email list and see data on your customers, including details like gender, age, parental status, location, and relevant Google Audiences (in-market audiences and affinity audiences). These are great options to layer onto your campaigns to gain more data and potentially bid up on these users, or to target and bid in a separate campaign to stay competitive on broader terms that might be too expensive.

Tool #4 – External insights (competitor research)

There are a few tools that help you conduct competitor research in paid search and paid social outside of the engines and internal data sources. SEMrush and SpyFu are great for understanding what search queries you are showing up for organically. These tools also allow you to do some competitive research to see what keywords competitors are bidding for, their ad copy, and the search queries they are showing up for organically. All of these will help you understand how your target consumer is interacting with your brand on the SERP. MOAT and AdEspresso are great tools to gain insights into how your competition portrays their brand on the Google Display Network (GDN) and Facebook. These tools will show you the ads that are currently running on GDN and Facebook, allowing you to further understand messaging and offers that are being used.

Tool #5 – Internal data sources

There might not be a large amount of data in your CRM system, but you can still glean customer insights. Consider breaking down your data into different segments, including top customers, disqualified leads, highest AOV customers, and highest lifetime value customers. Once you define those segments, you can identify your most-desirable and least-desirable customer groups and bid/target accordingly.

Conclusion

Whether you’re just starting a digital marketing program or want to take a step back to understand your target audience without the benefit of a big budget, you have options. Dig into the areas defined in this post, and make sure that however you’re segmenting your audiences, you’re creating ads and messaging that most precisely speak to those segments.

Lauren Crain is a Client Services Lead in 3Q Digital’s SMB division, 3Q Incubate.

The post Five tools for audience research on a tiny budget appeared first on Search Engine Watch.
source https://searchenginewatch.com/2019/04/08/five-tools-for-audience-research-on-a-tiny-budget/ from http://risingphoenixseo.blogspot.com/2019/04/five-tools-for-audience-research-on.html

Brian McCullough, who runs Internet History Podcast, also wrote a book named How The Internet Happened: From Netscape to the iPhone, which did a fantastic job of capturing the ethos of the early web and telling the backstory of so many people & projects behind its evolution. I think the quote which best captures the magic of the early web is

The part I bolded in the above quote from the book really captures the magic of the Internet & what pulled so many people toward the early web. The current web - dominated by never-ending feeds & a variety of closed silos - is a big shift from the early days of web comics & other underground cool stuff people created & shared because they thought it was neat. Many established players missed the actual direction of the web by trying to create something more akin to the web of today before the infrastructure could support it. Many of the "big things" driving web adoption relied heavily on chance luck - combined with a lot of hard work & a willingness to be responsive to feedback & data.

The book offers a lot of color to many important web related companies. And many companies which were only briefly mentioned also ran into the same sort of lucky breaks the above companies did. Paypal was heavily reliant on eBay for initial distribution, but even that was something they initially tried to block until it became so obvious they stopped fighting it:

Here is a podcast interview of Brian McCullough by Chris Dixon. How The Internet Happened: From Netscape to the iPhone is a great book well worth a read for anyone interested in the web.


source http://www.seobook.com/how-internet-happened-netscape-iphone from http://risingphoenixseo.blogspot.com/2019/04/how-internet-happened-from-netscape-to.html

Last month Google invited a select number of users and journalists to try out its new augmented reality functionality in Google Maps. It is an exciting development, particularly for those who have ever experienced difficulty finding a location in the real world when Google Maps’ blue dot fails to be accurate enough. But with local and hyperlocal SEO becoming a bigger consideration for businesses seeking to be visible in mobile search, are there implications here too?

How Google is bringing AR to its maps navigation

Back in February, Google invited a small number of local guides and journalists to try out the new AR functionality in its Google Maps service. As is detailed over on the Google AI blog, they are calling the technique “global localization”. It aims to make Google Maps even more useful and accurate by building on its GPS and compass powered blue dot with added visual positioning service (VPS), street view, and machine learning. The result incorporates AR and map data. When planning a route from A to B, users can use the smartphone lens to see the world in front of them with Google Maps’ location arrows and labels overlaid.

Challenges

Accuracy and orientation have always been the biggest challenges for real-time mobile mapping. GPS can be distorted by buildings, trees, and heavy cloud cover. Orientation is possible because mobile devices are smart enough to measure the magnetic and gravity field of the earth relative to the motion of the device. But in practice, these measurements are very prone to errors. With VPS, street view, and machine learning added to Google Maps’ route-planning functionality, the early reports are very positive. VPS works in tandem with street view to analyze key structures and landmarks in the field of view. It is pretty much the same way we as humans process what we are seeing in relation to what we expect to see. Once it calibrates, the AR functionality presents the relevant route markers (arrows and labels) to show the user where to go.

Of course, there are challenges with this added technology too. The real world changes. Structures come and go, which can make it difficult for VPS to marry up what it sees with what street view has indexed. This is where machine learning comes in, with data from current imagery being used to update, build upon, and improve what Google Maps already knows. An additional challenge concerns user habits. But Google has been quick to build in prompts to stop users from walking too far while looking through the AR lens, minimizing any potential risk from real-world hazards (vehicles, people, structures, and others) outside of the camera’s view.

Implications for search

When its AR functionality is rolled out, it’s not hard to see that Google Maps will have added appeal for users. This is building on an already massively popular tool. According to data from The Manifest, 70% of navigation app users use Google Maps. As a proportion of the 2.7 billion global smartphone user base, this is around 1.4 billion people. We’ve written at length here at Search Engine Watch about the importance of local and hyperlocal search.
If you have a real-world location for your business and you have not already done so, setting up a “Google My Business” profile is the first step to assisting Google Maps with knowing exactly where your business location is. In addition, it also improves how you are presented in local search elements such as three-pack listings, as well as giving the user clear opening time information, and the option to click-to-call. This is obviously important as more people come to Google Maps to use the AR functionality. On a hyperlocal level, as Google Maps’ AR looks to make increasing use of non-transient structures and landmarks, it might be good practice for businesses to make it more of a point to rank for key terms related to these landmarks. It is also easy to see how important it is to consider how your business looks from a joined-up marketing/UX perspective, with questions such as:

A positive step for users and SEOs

As a Google Maps user, I find this AR functionality very exciting, and I believe that it could have positive implications for SEO too. For businesses that have real-world locations, Google Maps is already a hugely important way for potential customers to discover you and pay a visit. AR is a fascinating arena of digital technology, and we have already seen its impact through games such as “Pokemon GO” and other AR mapping tools that are popular with users. Ultimately, inasmuch as search and navigation apps are lenses through which it pays to be visible, it seems AR will prove to be just as important when this technology is rolled out publicly.

Luke Richards is a writer for Search Engine Watch and ClickZ. You can find him on Twitter at @myyada.

The post Google tests AR for Google Maps: Considerations for businesses across local search, hyperlocal SEO, and UX appeared first on Search Engine Watch.

source https://searchenginewatch.com/2019/03/29/google-tests-ar-for-google-maps-considerations-for-businesses-across-local-search-hyperlocal-seo-and-ux from http://risingphoenixseo.blogspot.com/2019/04/google-tests-ar-for-google-maps.html

In the first article of my luxury search marketing series, I discussed the consumer mindset in the luxury vertical. I provided insight into what motivates luxury shoppers and what drives them to purchase. In the second article, I’ll build upon that foundation and explore how to craft SEO strategies that enable luxury marketers to maximize results in this highly competitive space. This article’s SEO recommendations address “on-page” ranking factors. Moz defines “on-page SEO” as optimizing both the content and HTML source code of the webpage. Prioritizing on-page SEO will help luxury marketers increase their organic search visibility by (1) improving search engine rankings and (2) driving traffic to their website.
Read also: 10 on-page SEO essentials: Crafting the perfect piece of content

Unfortunately, the work doesn’t stop once you have great on-page SEO. As I explained in my first article, consumers often purchase luxury goods to satisfy an emotional need. So, to truly maximize conversions, luxury marketers should deliver an emotionally fulfilling shopping experience. I’ll share some ideas on how to do this with high-quality content.

1. Understand keyword intent and get your brand in front of the right buyers

It is critical to understand the intent behind customers’ search behavior. You need to understand what they want in order to effectively optimize your website and create a solid foundation for a content strategy. Keyword research, which involves strategically analyzing intent, will enable you to understand consumers’ specific needs and how you should be targeting those searchers. There are three basic types of search intent:

Putting it into practice

How do you know if your website is addressing your customer’s intent? Start by evaluating your keyword targeting. Look beyond search volume and ask yourself if your keyword targeting matches the search intent. For example, if your page is informational in nature, is the term you are targeting and optimizing for consistent with an informational keyword search? Manually check the search results to ensure that the keyword and page you are targeting are the right fit for what’s appearing in the search results.

Read also: How to move from keyword research to intent research

2. Invest in your meta description to win the click

Although meta descriptions have not been a direct ranking factor since 2009, click-through rate can impact your pages’ ability to rank. Given this, marketers need to continue to invest in meta descriptions. Although custom meta descriptions are more work (especially when you’re dealing with ecommerce sites where content frequently changes), it’s worth the effort you put in to get the click. How do you write a stellar meta description? Here are a few tips.

1. Prioritize your evergreen pages

Evergreen pages are those pages where the page itself stays the same, even though the content may change slightly over time. These are your main landing pages, specifically your homepage and category level pages, such as “designer collections” or “jewelry & accessories”, where most of your traffic comes from. Even if the content changes slightly, these pages will have the chance to build up equity/credibility within the search engines, so make sure you nail the meta description.

2. Paint a picture

In my first article, I explained how many consumers purchase luxury goods to fulfill emotional needs. Use the meta description as an opportunity to address those needs and create an experience. You can do this with visually appealing descriptions that make great use of action verbs.
Action verbs deliver important information and add impact and purpose. The click-through rate improved by almost 2% on a page my team optimized using more descriptive copy. Some examples are:

3. Create urgency with your calls-to-action

In my first article, I also discussed the importance of communicating exclusivity when promoting luxury products. Use the meta description as a way to create a “fear of missing out” with your call-to-action. Some examples are:

4. Make sure it fits

Be mindful of character limits. Make sure you stay within 150 to 160 characters, otherwise your description will likely be cut off in search results. It doesn’t provide the user with a good experience when a key part of your message is missing.

5. Hire a professional copywriter

If you are struggling with writing creative and compelling descriptions, I strongly recommend working with a professional copywriter, especially for your website’s key pages. Good copywriters can add the magic touch to your meta descriptions.
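The length guidance in “Make sure it fits” is easy to check in bulk before descriptions go live. Below is a minimal sketch in Python, assuming the 150 to 160 character window quoted above; the function name, the status labels, and the sample copy are hypothetical, and search engines may truncate differently in practice.

```python
# Minimal sketch: flag meta descriptions that fall outside the
# 150-160 character window mentioned in the article.
# The function name and status labels are illustrative assumptions.
MIN_CHARS = 150
MAX_CHARS = 160


def check_meta_description(text: str) -> str:
    """Return 'ok', 'short', or 'too long' based on character count."""
    length = len(text.strip())
    if length > MAX_CHARS:
        return "too long"   # likely to be cut off in search results
    if length < MIN_CHARS:
        return "short"      # room left to add detail or a call-to-action
    return "ok"


if __name__ == "__main__":
    # Hypothetical sample copy, not taken from any real site.
    sample = "Discover our limited-edition designer collection before it is gone."
    print(len(sample), check_meta_description(sample))
```

Running a check like this across your evergreen pages first gives you a short list of descriptions to hand to a copywriter.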

Putting it into practice

Conduct an honest assessment of your meta descriptions. Is this something you would click on for more information? Winning the click can help improve your click-through rate and, as a result, your SEO ranking position. More importantly, it can help improve your conversion rate, which translates into sales and more money earned. And don’t forget to take stock of what your competitors are doing. Are they winning the click because they are using more creative descriptions, and more enticing, urgent calls to action?

3. Create emotionally fulfilling and relevant content that reiterates the urgency

We’ve talked a lot about the importance of emotionally fulfilling content in luxury marketing. So, what exactly qualifies as emotionally fulfilling content? What type of content or shopping experience is going to trigger that dopamine hit that makes us feel good and go back for more? In its most basic sense, emotionally fulfilling content is content that makes you feel something. Think about a story that you love. Do you remember how it felt to be totally immersed in the story? If it were a book, you couldn’t put it down. Or if it were a TV show that you had to binge-watch for the entire series, you had to keep watching because you couldn’t get enough. That’s the type of content I’m talking about. It’s content that leaves you feeling satisfied, content, and engaged. This type of content fulfills our high-level needs, as we discussed in the first article. Buying that Fendi handbag, or Rolex watch, can give us the confidence we need and appeal to our sense of belonging. We connect with stories, especially stories we can relate to. Chanel does a great job with this type of website content. I’m a Chanel brand fan and a jewelry lover, so Chanel’s 1.5, 1 Camelia. 5 Allures resonates with me. Chanel creates an experience that you can truly immerse yourself in.
Consumers aren’t the only ones who love good content

For years Google has been stressing the importance of high-quality content. This type of content is written for the user, not the search engine, but we know that the engines tend to reward strong content with an increased search engine ranking position. In addition to strong content, the use of urgency elements and descriptive calls-to-action are powerful ways to drive conversions. How often have you scrolled through a website to find your desired product with a “limited quantity – only three left!” label? That’s a powerful motivator that pushes consumers to make in-the-moment purchases. Leveraging the “fear of missing out” is a powerful tactic that can be applied to products to help drive conversions. Lyst had a 17% conversion rate increase when they showed items on product pages that were selling quickly. You can create urgency in a few different ways:

Putting it into practice

Spend some time examining your content. Is it emotionally fulfilling and relevant enough for your customer? Is this something you would be interested in? If not, what can you do to improve it? Content that is emotionally fulfilling and relevant often tells a story and keeps your users coming back for more. Remember, Google tends to reward this type of content with increased search engine rankings. Also, consider how you can incorporate urgency elements onto specific pages. Think in terms of quantity, time, and context.

Final thoughts

Content that’s relevant and creates an emotionally fulfilling experience for the user should be at the heart of any luxury brand’s marketing campaign. We crave this content because of the experience that it provides for us and how it makes us feel. Don’t forget about the dopamine connection! The foundation of your SEO campaign should start with keyword intent research. It’s not enough to target search volume alone; you must balance that with user intent. Finally, invest in your meta description by creating something truly enticing that makes people want to click through, learn more about your brand, and convert. In the final article in the series, we’ll tie everything together and discuss integrating search marketing with other channels in the luxury goods industry. Stay tuned!

Jennifer Kenyon is a Director of Organic Search at Catalyst (part of GroupM). She can be found on Twitter @JennKCatalyst.

The post Luxury marketing search strategy, Part 2: Strategies and tactics appeared first on Search Engine Watch.

source https://searchenginewatch.com/2019/04/03/luxury-marketing-search-strategy-series/ from http://risingphoenixseo.blogspot.com/2019/04/luxury-marketing-search-strategy-part-2.html

As SEOs, we know outreach for backlinks has to be done in order to give the websites we are working on the backlink authority they need.
With backlinks being one of the top three ranking factors (depending on which study you’re reading), there is definite value in doing outreach. However, this is made a lot harder thanks to the mysterious world of black hat SEO. Webmasters are now savvier about the tactic of sending a generic-feeling email, and on our part, it’s a lot of work for usually not much return. This is why I’ve collated a list of some of the ninja backlink outreach tactics I’ve found which work great for most sites. At the heart of most of these techniques is some good, exciting content to make your website stand out, as backlinks and content work hand in hand. So let’s get going and earn our black belt in building backlinks. (Apologies, there will be a few more bad ninja-related jokes in this post.)

Six of the best ways to build backlinks

1. Sponsoring a college or university team

Before we get into this first one, note that it does involve a bit of money on your or your client’s part to get the backlink. It would usually cost a few hundred pounds to sponsor the team, and all you’re asking in return is a backlink from their team page which, if you’ve done the research right, will be on a .ac.uk or .edu domain that naturally has high authority.

2. Skyscraper technique

This one is borrowed from Brian Dean from Backlinko, and you can get more details from him on this here. In essence, this approach involves finding the top-ranking piece of linkable content you can find for a search term you’re trying to rank for. Then you build on that idea to make a better version of the piece and reach out to the right people to gain the right exposure. If someone has created content on the “best top 10 ways to be a ninja”, you can go and make a post of the “top 11 ways to be a ninja”.
It is key to this technique to make sure that you find the right area and do the correct research, so if you’re working on behalf of a client, get their input and then do your own research to back up their knowledge.

3. Interview with someone on your website

Yes, this is a way to get backlinks by doing the bulk of the work on your own website. Find an influential person in your industry and convince them to give you an interview for your website. Unless you’re running a website all about celebs, most people within any industry will be flattered that you would want to interview them. The only stipulation is that they will need to have some type of following on social media, because it will then be as much in their interest to share the content as yours. So once you have the interview, share it with your PR and social team, and allow them to share the piece as much as they can. Hopefully, you will get some valuable industry-related backlinks from this.

4. Create a free tool

Free tools are a great way to gain backlinks. All you have to do is look at the SEO industry and find “top free SEO tools” posts to see a list of free tools, some of which have only been created to build authoritative backlinks naturally. These do not need to be anything fancy, only something that serves the user. For example, a mortgage broker creating a mortgage calculator is a simple solution to get targeted traffic on their site and gain backlinks from sites related to mortgages.

5. Create your own data

There is a lot to be said about the impact of data and how this can be used to gain backlinks. But what if you have no interesting data you can share to get out into the news? Well, there are ways you can create your own data. There are great sites like Google Surveys where you can ask a set of questions to a specified number of people and get back relevant data based on your own parameters.
However, it is what you do with the data that counts in gaining backlinks with this technique. Once you have completed the post on your website with a snappy clickbait headline, head over to Reddit, find the most relevant subreddits you can, and post your content anonymously to see if it gets picked up. In case it fails to get picked up by any sites, get on Twitter and start contacting local and industry press journalists. Soon enough someone will pick it up.

6. Video transcripts

This final technique takes its inspiration from Moz’s Whiteboard Fridays, which we all know and love. Every video is accompanied by a transcript. As Moz knows, Google finds it very difficult to understand the context of videos, so they provide HTML text that gives a much clearer indicator to Google. This makes Google’s life much easier. How can you use this to your advantage exactly? Well, all you have to do is find some recent video content from an expert or influencer in your field. Check their site to see if the content is accompanied by a transcript of the video. If not, then jackpot! From there, create a transcript for the video and host it on your own or your client’s website. The last step involves a quick buttering up of the influencer on Twitter. It could be something along the lines of “Loved the last video, you’re amazing. I have created a transcript for the video if it is useful for anyone, the link is here.” Hopefully, they’ll give a retweet, and with the shares of their content come some shares and backlinks for you.

Conclusion

Hope the whole ninja theme wasn’t too cringy. The main point is that, yes, link building is much harder than it was a few years ago, and everyone is so tuned out to emails asking for a backlink that they’re just going to ignore them. Still, backlink building can be done. Just think outside the box, be a bit sneaky like a ninja, get creative, and make the best quality content you can for your users.
Mark Osborne is the SEO Manager at Blue Array, with a passion for keeping up to date on the latest goings-on in the SEO world. He can be found on Twitter @MarkSEOsborne. For more on backlinks, read:

The post Doing backlink building like a ninja: Six best techniques appeared first on Search Engine Watch.

source https://searchenginewatch.com/2019/03/27/backlink-building-six-best-techniques/ from http://risingphoenixseo.blogspot.com/2019/04/doing-backlink-building-like-ninja-six.html

Back in September 2018, Google launched its Dataset Search tool, an engine which focuses on delivering results of hard data sources (research, reports, graphs, tables, and others) in a more efficient manner than the one which is currently offered by Google Search. The service promises to enable easy access to the internet’s treasure trove of data. As Google’s Natasha Noy says,

For SEOs, it certainly has potential as a new research tool for creating our own informative, trustworthy, and useful content. But what of its prospects as a place to be visible, or as a ranking signal itself?

Google Dataset Search: As a research tool

As a writer who has been using Google to search for data for about a decade, I’d agree that finding hard statistics on search engines is not always massively straightforward. Often, data which isn’t the most recent ranks better than newer research. This makes sense from an SEO perspective: content published months or years prior has had a long time to earn authority and traffic. But usually I need the freshest stats, and even when search results point to a page that has been published recently, that doesn’t necessarily mean that the data contained in that page is from that date. Additionally, big publications (think news sites like the BBC) frequently rank better than the domain where the data was originally published. Again, this is unsurprising in the context of search engines. The BBC et al. have far more traffic, authority, inbound links, and changing content than most research websites, even .gov sites. But that doesn’t mean that the user looking for hard data wants to see the BBC’s representation of that data. Another key issue when researching hard data on Google concerns access to content. All too regularly, after a bit of browsing in the SERPs, I find myself clicking through only to find that the report with the data I need is behind a paywall. How annoying. On the surface, Google Dataset Search sets out to solve these issues.

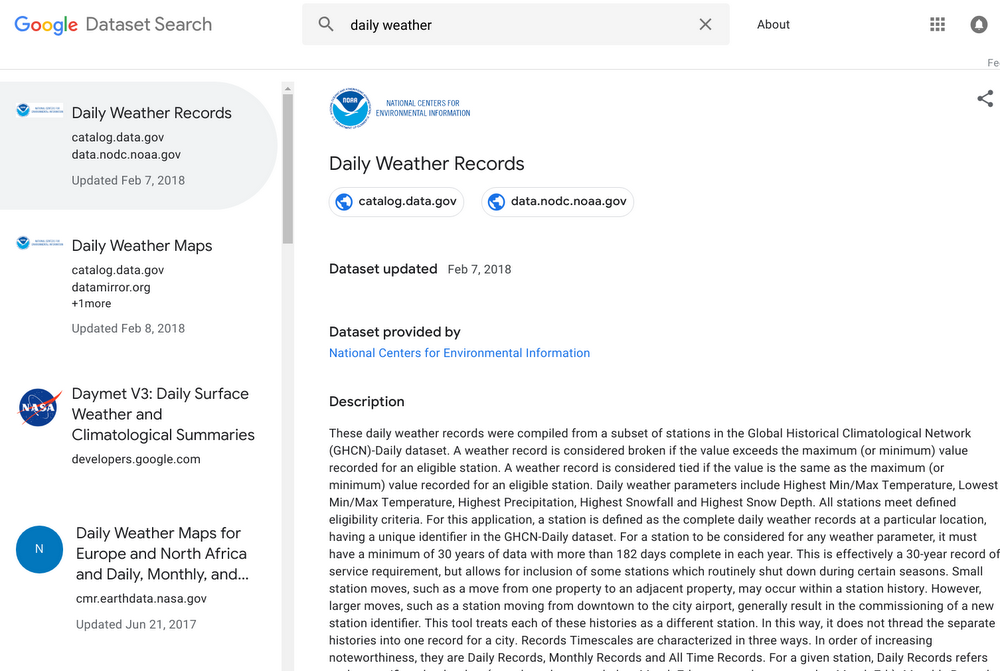

A quick search for “daily weather” (Google seems keen to use this kind of .gov data to exemplify the usefulness of the tool) shows how the service differs from a typical search at Google.com. Results rank down the left-hand side of the page with the rest of the SERP real estate given over to more information about whichever result you have highlighted (position one is default). This description portion of the page includes:

By comparison, a search for the same keyphrase on Google in incognito mode prioritizes results for weather forecasts from Accuweather, the BBC, and the Met Office. So to have a search engine which focuses on pure, recorded data is immediately useful. Most results (though not all) make it clear to the user when the data is from and what the original source is. And by virtue of the source being included in the Dataset Search SERPs, we can be quite sure that a click through to the site will provide us access to the data we need.

Google Dataset Search: As a place to increase your visibility

As detailed in Google’s launch post for the service, Dataset Search is dependent on webmasters marking up their datasets with the Schema.org vocabulary. Broadly speaking, Schema.org is a standardized way for developers to make information on their websites easy to crawl and understandable by search engines. SEOs might be familiar with the vocabulary if they have marked up their video content or other non-text objects on their sites, or if they have sought to optimize their business for local search. There are ample guidelines and sources to assist you with dataset markup (Schema.org homepage, Schema.org dataset markup list, Google’s reference on dataset markup, and Google’s webmaster forum are all very useful). I would argue that if you are lucky enough to produce original data, it is absolutely worth considering making it crawlable and accessible for Google. If you are thinking about it, I’d also argue that it is important to start ranking in Google Dataset Search now. Traffic to the service might not be massive currently, but the competition to start ranking well is only going to get more difficult. The more webmasters and developers see potential in the service, the more it will be used. Additionally, dataset markup will not only benefit your ranking in Dataset Search; it will also increase your visibility for relevant data-centric queries in Google too.
This is an important point, as we see tables and stats incorporated more frequently and more intuitively in elements of the SERPs such as the Knowledge Graph.
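To make the markup step concrete, here is a minimal sketch in Python that builds Schema.org `Dataset` markup as JSON-LD and wraps it in a script tag ready to paste into a page. The property names (`name`, `description`, `url`, `datePublished`, `temporalCoverage`, `keywords`) come from the Schema.org vocabulary; the helper names and every sample value, including the example.com URL, are hypothetical. Check Google’s dataset markup reference for the properties it currently expects.

```python
import json


def build_dataset_jsonld(name, description, url, date_published,
                         temporal_coverage, keywords):
    """Build a Schema.org Dataset description as a plain dict."""
    return {
        "@context": "https://schema.org/",
        "@type": "Dataset",
        "name": name,
        "description": description,
        "url": url,
        "datePublished": date_published,        # when the data was published
        "temporalCoverage": temporal_coverage,  # period the data covers
        "keywords": keywords,
    }


def to_script_tag(markup):
    """Serialize the markup as a JSON-LD script tag for the page HTML."""
    body = json.dumps(markup, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # All values below are placeholders for illustration only.
    markup = build_dataset_jsonld(
        name="Daily weather observations (sample)",
        description="Hypothetical daily temperature and rainfall records.",
        url="https://example.com/datasets/daily-weather",
        date_published="2019-04-02",
        temporal_coverage="2018-01-01/2018-12-31",
        keywords=["weather", "temperature", "rainfall"],
    )
    print(to_script_tag(markup))
```

Stating both the publication date and the period covered addresses the freshness problem described earlier, since a searcher can see how old the data is before clicking through.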

Google Dataset Search: As a ranking signal

There is good reason to believe that being indexed in Dataset Search will be a ranking signal in its own right. Google Scholar, which indexes scholarly literature such as journals and books, has been noted by Google to provide a valuable signal about the importance and prominence of a dataset. With that in mind, it makes sense to think a dataset that is well-optimized with clear markup and is appearing in Dataset Search would send a strong signal to Google. This would signal that the respective site is a trusted authority as a source of that type of data.

Thoughts for the future

It is early days for Google Dataset Search. But for SEO, the service is already showing its potential. As a research tool, its usefulness really depends on the community of research houses who are marking up their data for the benefit of the ecosystem. I expect the number of contributors to the service will grow quickly, making for a diverse and comprehensive data tool. I also expect that the SERPs may change considerably. They certainly work better for these kinds of queries than Google’s normal search pages. But I had some bugbears. For example, which URL am I expected to click on if a search result has more than one? Can’t all results have publication dates and the time period the data covers? Could we see images of graphs/tables in the SERPs? But when it comes to potential as a place for visibility and a ranking signal, if you are a business that collects data and research (or you are thinking about producing this type of content), now is the time to ensure your datasets are marked up with Schema.org to beat your competitors in ranking on Google Dataset Search. This dataset best practice will also stand you in good stead as Google’s main search engine gets increasingly savvy with how it presents the world’s data.

Luke Richards is a writer for Search Engine Watch and ClickZ. You can follow Luke on Twitter at @myyada.
The post Google Dataset Search: How you can use it for SEO appeared first on Search Engine Watch.

source https://searchenginewatch.com/2019/04/02/using-google-dataset-search-seo/ from http://risingphoenixseo.blogspot.com/2019/04/google-dataset-search-how-you-can-use.html

Hubspot found that 80% of marketing professionals lean on visual content in their social media marketing. It is reasonable to conclude that social media marketing is moving in a direction where image and video content will play pivotal roles. Traditionally, content marketing has performed three roles:

There are statistics that provide mounting evidence of the importance of a visual content marketing strategy.

Key insights on the changing face of visual content marketing

How do images and video content create value for brands on social media?

Prima facie, visual content augments value creation on social media in the following ways:

Challenge of leveraging visual content successfully for social media marketing

Accenture Interactive conducted a survey of more than 1000 consumers that sought to objectively assess their tastes and preferences, habits, and likes and dislikes in content consumption, and thus identify patterns and insights on visual content marketing and consumption. The research found that visual content, and especially video, is still considered invasive by a large share of people. The findings on page six of the published report state that 35% of users find the use of video content for advertising inconvenient and invasive, as opposed to 26% of users who stated they prefer to see video advertisements.

Features of an awesome visual content design tool for social media marketing

Given the importance of visual content in social media marketing and the abundance of design tools for creating it, it is worthwhile to explore the features, functionality, and performance parameters that make for a great design tool. Other important aspects include ease of use, pricing, integration with other social media apps, and the spectrum of content prototypes that can be created using these design tools, including but not limited to infographics, graphs, pie charts, bar charts, and scatter diagrams. Some of the major parameters in assessing the merit of a great design tool for creating visual content are as follows:

The best visual content design tools for social media marketing

With the knowledge of the parameters that you need to focus on for visual content creation in social media marketing, it is easy to identify a list of the best design tools that are available and in demand for the job. Have a look.

1. Bannersnack

Making it to the list of the top design tools for image content creation is Bannersnack. Immensely popular with social media marketing professionals and graphic designers, the online banner maker offers the following features:

2. Venngage

Second on our list of online banner makers is Venngage. Offering hundreds of charts, maps, and icons for data visualization, this online banner maker is widely used for making infographics. Venngage offers the following features:

3. Visme

Third on the list of the best visual content creation tools is the online banner maker from Visme. It is highly popular among graphic designers and marketing professionals, and offers a host of features, functionalities, and end-user benefits, as listed below:

4. Fotor

Fourth on our list of the top visual content creation tools for use in social media campaigns is Fotor. With probably one of the widest and most diverse arrays of templates for creating image content, Fotor offers multi-purpose functionality that embraces both the online and onsite business ecosystems. Fotor offers the following features to users:

5. My Banner Maker

Fifth on our list of the best visual content creation tools is My Banner Maker. An easy-to-use online banner maker, My Banner Maker stands out for the professional turnkey assistance and professional collaboration it provides in addition to its technology suite. Some of the major features that My Banner Maker offers are as follows:

In the final analysis, it is worth acknowledging the ever-increasing influence of visual content in social media campaigns and the opportunities that brands can look forward to leveraging. While this list is by no means exhaustive, the visual content creation tools mentioned above made our list on the parameters of user experience, custom design abilities, and breadth of deployment across digital platforms. Here is wishing your brand all the luck to reinvent the future of social media marketing by creating some stunning visuals this year.

Birbahadur Singh Kathayat is an entrepreneur, internet marketer, and co-founder of Lbswebsoft. He can be found on Twitter @bskathayat.

The post Visual content creation tools for stunning social media campaigns appeared first on Search Engine Watch.

source https://searchenginewatch.com/2019/04/02/visual-content-creation-tools-social-media/ from http://risingphoenixseo.blogspot.com/2019/04/visual-content-creation-tools-for.html
